The evolution of infrastructure and control planes

Virtualization and cloud computing made automation a necessity. In the “old” days, it would take weeks for a new physical server to arrive, so there was little pressure to install and configure its operating system rapidly. We would insert a disc into the drive and then follow our checklist. A few days later, the server would be ready to use.

But once we could spin up new virtual machines (VMs) in minutes, we had to get better at automating this process. Because new VMs were so easy to create, we found ourselves with an ever-growing fleet of servers, and the need to keep a constantly growing and changing number of servers up to date spawned new tools.

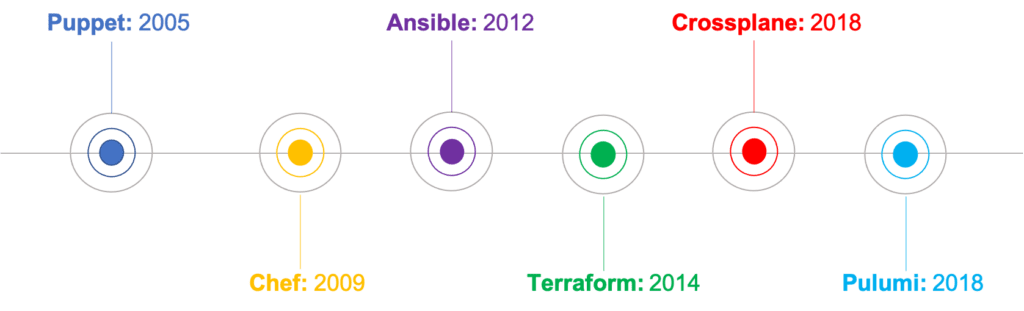

Tools like Ansible, Puppet, and Chef established a new category of infrastructure automation, and they were quickly adopted by organizations globally.

A few years later, on-premises infrastructure could no longer keep up, so companies started adopting cloud computing as an architectural option to help balance cost and speed.

With that movement, we again saw new tools emerge, such as Terraform and Docker. And as microservices and Kubernetes became viable options, we more recently saw tools such as Crossplane and Pulumi released and adopted.

The chart above shows how quickly the infrastructure space has evolved, and that continuous change is now inevitable. Still, all of this served one end goal: delivering applications faster.

The impact on development

I believe it’s reasonable to say that the tools discussed above had two common goals:

- To automate infrastructure provisioning and management.

- To support application deployments.

Although these tools shared those objectives, each one brought its own way of automating application deployments, and teams accumulated those different approaches as they implemented the tools and transitioned between them over time.

Almost every team we talk to uses these tools both for infrastructure automation and for application deployment, and that’s where it gets complex.

Automating application deployment across multiple infrastructures, teams, and tools creates complexity for developers: deploying, managing, and supporting their applications across these different platforms becomes time-consuming.

And this leads us to where we are today.

Where things are today

Unless you are part of a relatively new company that was “born in the cloud,” there is a good chance that your company is not fully on cloud-native technologies and workflows. Words like Kubernetes and GitOps describe goals for the future more than your current reality.

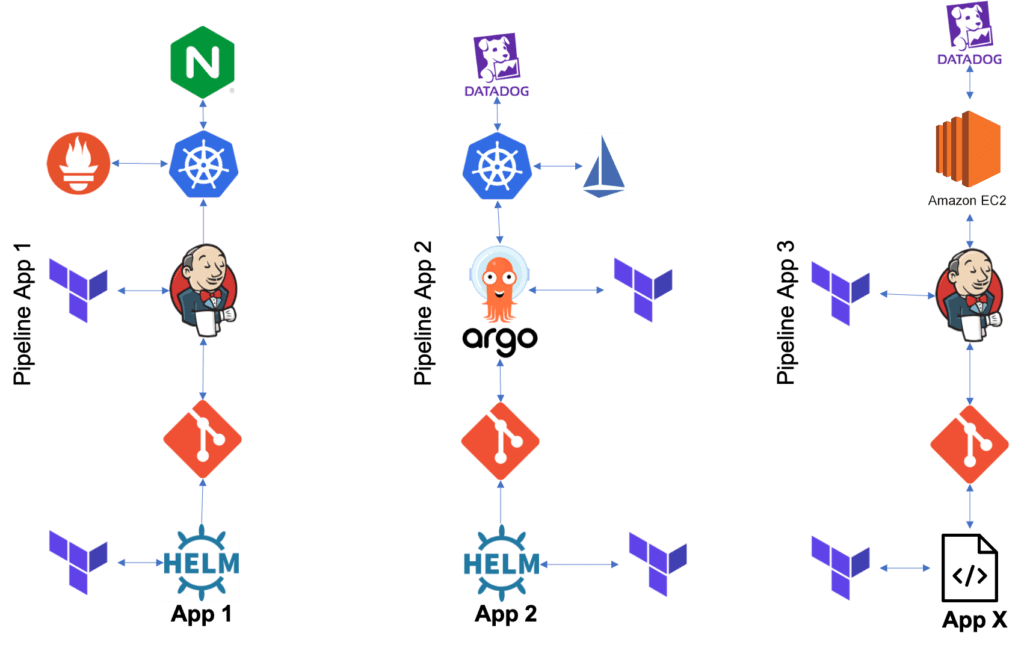

The reality is that most companies are still running applications on virtual machines, either on-premises or in the cloud. They are still deploying many of these applications using multiple CI pipelines, Terraform, and more.

For application deployment, developers have to use different Terraform configurations or custom scripts, and once the applications are deployed, supporting them properly becomes a challenge. This complexity in pipelines and automation, combined with a lack of application-level control, causes friction between developers and operations teams, slows developers down, and lengthens application support time, among other effects.

Not only that, but as the number of developers, applications, and environments grows, maintaining control over these applications and keeping them secure becomes almost impossible.

It is not uncommon to see things like this:

Have we been looking at it from the wrong perspective?

Over the years, most of the focus has been on control planes that automate the provisioning of infrastructure and services. If you pay close attention, though, the application layer was never the focus, and teams have always had to adapt these tools to make them work with their applications.

Now, the words of the day are Kubernetes and GitOps, and to adopt them you will once again have to build different pipelines, different security controls, and more.

As a result, you end up managing even more environments, with applications sprawled everywhere and no unified control or security, and your developers will continue to see the platform or DevOps engineering team as a bottleneck rather than an enabler.

There is no denying that this problem is growing, and it will keep growing. In response, we see many organizations “throwing more people and tools at the problem” in the hope of fixing it.

Current solutions

Internal Developer Platforms (IDPs) are growing in popularity as many organizations build platforms that abstract the infrastructure away from developers, enabling their teams to ship applications faster and operate them better.

Yet we often see companies abandon these initiatives after months or even years of effort, sizable financial investment, and constant downtime and security breaches. They realize that building a dashboard for their developers won’t fix the problem; it only adds pain and cost, and it delivers nothing of core value that their customers will ever see.

When we sat down with these companies to understand what they were trying to achieve, it came down to the following:

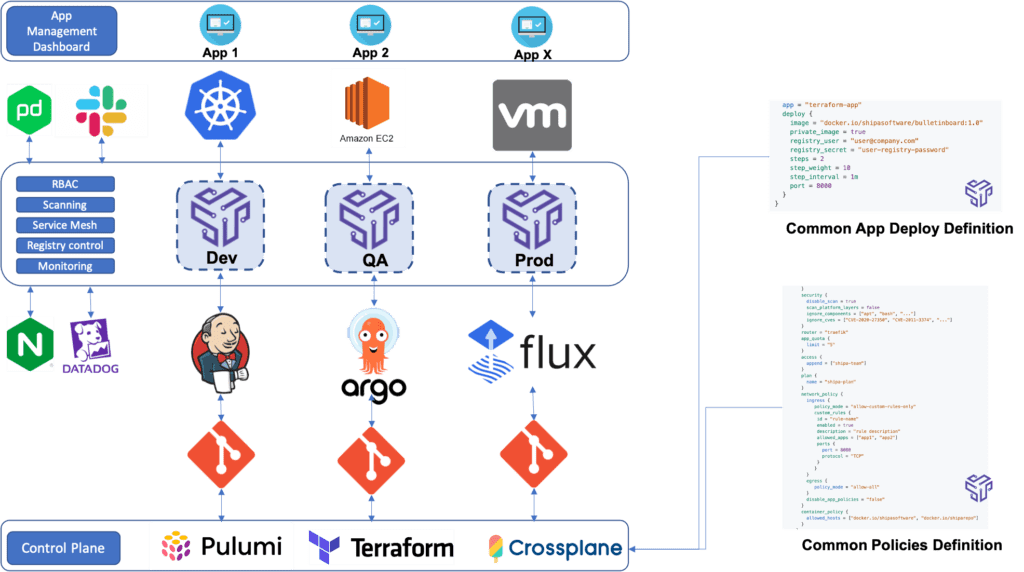

- Build a common application operating model that enables developers to deploy their applications regardless of the control plane, CI/CD pipelines, and other tooling chosen for their projects.

- This application operating model should enable the DevOps or platform engineering team to change control planes (Kubernetes, VMs, GitOps, cloud providers, and so on) as they wish, without impacting how developers deploy, manage, and support their applications.

- The application operating model should be flexible enough that the platforms in their existing stack can plug into it and consume it, allowing teams to establish the model as a standard within their organizations.

- Security and controls should be defined centrally by the DevOps or platform engineering team. Whatever platform is plugged into the model consumes that control layer, and every deployed application has those controls enforced automatically, without developers having to learn or deal with them.

- The application operating model should automatically create the resources each application needs to run, based on the underlying infrastructure plugged into it.
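To make the wishlist above more concrete, here is a minimal Python sketch of the idea, under simplifying assumptions: developers describe only the application (`AppSpec`), the platform team defines one central `Policy`, and any backend that implements a `provision` method can be registered as a control plane. Every name here (`AppSpec`, `Policy`, `KubernetesPlane`, `VMPlane`, `AppOperatingModel`, `registry.example.com`) is hypothetical and invented for illustration; this is not Shipa’s API, and the “resources” are plain strings rather than calls to real infrastructure.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Protocol


@dataclass
class AppSpec:
    # What a developer describes: the application, not the infrastructure.
    name: str
    image: str
    port: int


@dataclass
class Policy:
    # Controls defined once by the platform team (hypothetical fields).
    allowed_registries: List[str]
    network_ingress: bool = False


class ControlPlane(Protocol):
    # Any backend that can plug into the model just needs this method.
    def provision(self, app: AppSpec, policy: Policy) -> List[str]: ...


class KubernetesPlane:
    # Stand-in for a Kubernetes backend: returns resource names, creates nothing.
    def provision(self, app: AppSpec, policy: Policy) -> List[str]:
        resources = [f"Deployment/{app.name}", f"Service/{app.name}"]
        if not policy.network_ingress:
            resources.append(f"NetworkPolicy/{app.name}-deny-ingress")
        return resources


class VMPlane:
    # Stand-in for a VM backend: the same policy maps to a firewall rule.
    def provision(self, app: AppSpec, policy: Policy) -> List[str]:
        scope = "public" if policy.network_ingress else "internal-only"
        return [f"vm/{app.name}", f"firewall-rule/{app.name}-{app.port}-{scope}"]


@dataclass
class AppOperatingModel:
    policy: Policy
    planes: Dict[str, ControlPlane] = field(default_factory=dict)

    def register_plane(self, name: str, plane: ControlPlane) -> None:
        # The platform team can add or swap backends without touching apps.
        self.planes[name] = plane

    def deploy(self, app: AppSpec, target: str) -> List[str]:
        # Central controls are enforced before any backend sees the app.
        registry = app.image.split("/", 1)[0]
        if registry not in self.policy.allowed_registries:
            raise PermissionError(f"registry {registry!r} is not allowed")
        return self.planes[target].provision(app, self.policy)


# Usage: the developer-facing call is identical for both backends.
model = AppOperatingModel(Policy(allowed_registries=["registry.example.com"]))
model.register_plane("k8s", KubernetesPlane())
model.register_plane("vm", VMPlane())

app = AppSpec("checkout", "registry.example.com/checkout:1.2", 8080)
k8s_resources = model.deploy(app, "k8s")
vm_resources = model.deploy(app, "vm")
print(k8s_resources)
print(vm_resources)
```

The point of the sketch is that the application description and the `deploy` call stay the same for both targets; only the registered plane changes, while the central policy is applied on every path. That is the property the wishlist asks for when a platform team swaps control planes.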

Defining a new category

Looking at the wishlist above, it is clear that Infrastructure as Code platforms and infrastructure control plane tools will not, on their own, meet these requirements.

For teams to succeed in delivering both automated infrastructure AND an application layer that delivers on the promise above, a new Application Operating Model (AppOps) should be used in conjunction with the infrastructure tools they currently have and the ones that will come in the future.

AppOps should make the underlying infrastructure, CI pipeline, or control plane irrelevant to how developers deploy, manage, and secure their applications.

Over the past year, we have delivered several talks and presentations on themes such as “Kubernetes should disappear” and “How Kubernetes will disappear, and your developers won’t care.” We are now seeing other presenters repeat the same message, which reinforces this need and vision.

We built Shipa because we believe that, for teams to succeed both today and in the future, an Application Operations category had to be created. We want to position DevOps and platform engineering teams as critical enablers in their organizations, equipped with the tools to enable velocity, security, and control.

Application Operations should be seen as a category rather than simply a tool, with other categories such as Continuous Integration, Application Performance Management, and Application Security sitting adjacent to it. By integrating with them, teams get a unified operating model focused on their applications.

Without a platform that defined this category, teams were forced to glue together tools from the adjacent categories, resulting in clunky, hard-to-maintain systems that hurt developers and, ultimately, the speed of delivering and supporting applications.

If we compare the current scenario with what we see users building with Shipa, we see this instead:

What’s next?

We are in the early stages of building a solid category, but the initial adoption has been nothing short of amazing.

As adoption grows and users apply Shipa to new use cases, this vision will keep evolving while staying focused on operationalizing how you deploy, manage, support, and secure applications across heterogeneous infrastructure.